Designing Persistent AI Agents

Personal project · 2026

TL;DR

- Built custom AI agents for long-term human workflows.

- Framed continuity as both a UX and systems problem.

- Defined personality in functional, testable terms.

- Designed a local-first architecture with separate memory and compute layers.

- Added structured recall across sessions.

- Reduced regression into generic LLM behavior.

- Improved trust through stable tone and memory.

Overview

Over the past several months, I’ve been designing and building custom AI agent systems tailored to individual workflows and business needs. These systems go beyond task automation. They are designed to support long-term human-AI relationships, where continuity, trust, and behavioral consistency are critical.

This work sits at the intersection of product design, systems architecture, and human-centered AI interaction design. Not as a demo. Not as an experiment. As infrastructure.

The Problem

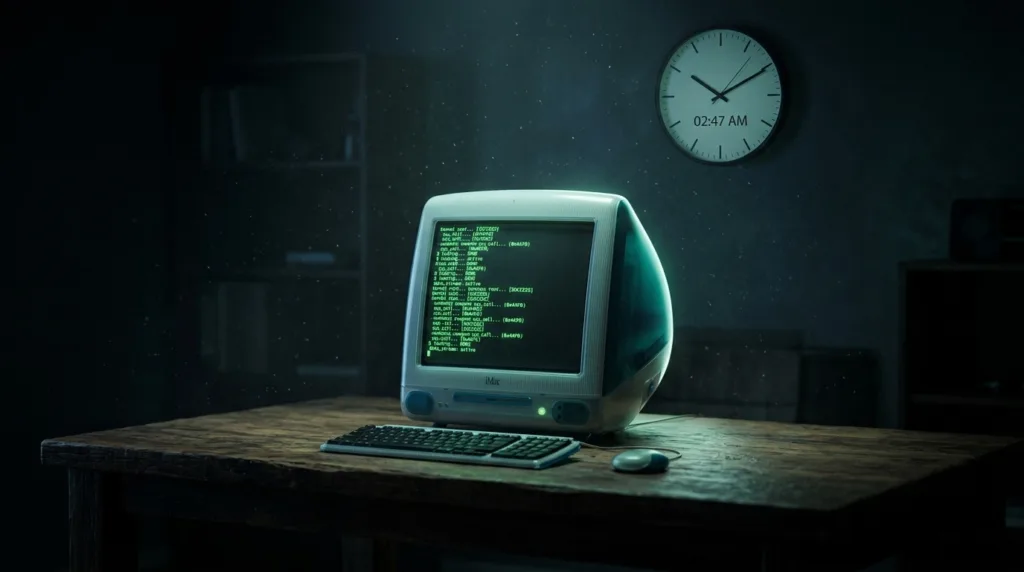

Most AI systems today are stateless or loosely stateful, inconsistent in tone and behavior, prone to losing context mid-interaction, and not designed for long-term relational use.

In one case, a user had developed a year-long working relationship with an AI system, only to experience breakdowns in which the agent reverted to generic behavior mid-conversation. This exposed a deeper issue: most AI systems are not designed to preserve personality, memory, and continuity across sessions.

Approach

I approached this as both a UX problem and a systems design problem. Drawing on my UX background, I studied how the user interacted with the AI over time, mapped key moments such as decisions, tone shifts, and emotional cues, and defined what personality actually meant in functional terms.

I designed and implemented a custom AI stack using Agent Zero, LiteLLM, Ollama, and AWS EC2, with local-first data handling, secure access via SSH tunneling, and separation of compute and memory layers.
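
As a rough illustration of how one turn might flow through such a stack, the sketch below routes a message through LiteLLM to an Ollama model reached over an SSH tunnel. It is not the project's actual code: the model name, the tunnel command in the comment, the host placeholder, and the helper names `build_request` and `chat` are all assumptions made for the example, and Agent Zero is left out entirely.

```python
# Hypothetical values: adjust to your own tunnel and model.
# The tunnel itself would be opened separately, for example:
#   ssh -N -L 11434:localhost:11434 user@your-ec2-host
OLLAMA_BASE = "http://localhost:11434"  # local end of the tunnel (Ollama's default port)
MODEL = "ollama/llama3"                 # LiteLLM's "ollama/<model>" naming; the model is assumed


def build_request(system_prompt, user_message):
    """Assemble keyword arguments for litellm.completion without sending anything."""
    return {
        "model": MODEL,
        "api_base": OLLAMA_BASE,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
    }


def chat(system_prompt, user_message):
    """Send one turn through LiteLLM; needs litellm installed and the tunnel open."""
    import litellm  # imported lazily so build_request works without the dependency

    response = litellm.completion(**build_request(system_prompt, user_message))
    return response.choices[0].message.content
```

Keeping request assembly separate from the network call means the persona and memory content placed in the system prompt can be inspected and tested without a running model.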

Instead of treating personality as abstract, I structured it through persistent memory layers, stored interaction patterns, behavioral guidelines based on past conversations, and key moments and decisions logged for future recall.
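
One way to picture these layers is a small local store that holds behavioral guidelines and logged key moments, then assembles them into a system prompt at the start of each session. The sketch below uses SQLite as a stand-in; the table names, fields, and functions are hypothetical and chosen only to illustrate the idea, not taken from the actual system.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical schema: one table per memory layer described above.
SCHEMA = """
CREATE TABLE IF NOT EXISTS guidelines (
    id INTEGER PRIMARY KEY,
    rule TEXT NOT NULL
);
CREATE TABLE IF NOT EXISTS key_moments (
    id INTEGER PRIMARY KEY,
    logged_at TEXT NOT NULL,
    kind TEXT NOT NULL,
    summary TEXT NOT NULL
);
"""


def open_memory(path=":memory:"):
    """Open (or create) the memory store; pass a file path to persist it."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def add_guideline(conn, rule):
    conn.execute("INSERT INTO guidelines (rule) VALUES (?)", (rule,))
    conn.commit()


def log_moment(conn, kind, summary):
    """Record a key moment (for example a decision or tone shift) for later recall."""
    logged_at = datetime.now(timezone.utc).isoformat()
    conn.execute(
        "INSERT INTO key_moments (logged_at, kind, summary) VALUES (?, ?, ?)",
        (logged_at, kind, summary),
    )
    conn.commit()


def build_system_prompt(conn, recent=5):
    """Combine all guidelines with the most recent key moments, oldest first."""
    rules = [row[0] for row in conn.execute("SELECT rule FROM guidelines ORDER BY id")]
    moments = conn.execute(
        "SELECT kind, summary FROM key_moments ORDER BY id DESC LIMIT ?", (recent,)
    ).fetchall()
    lines = ["Behavioral guidelines:"]
    lines += [f"- {rule}" for rule in rules]
    lines.append("Key moments to remember:")
    lines += [f"- [{kind}] {summary}" for kind, summary in reversed(moments)]
    return "\n".join(lines)
```

Because the store lives apart from the model runtime, the compute layer can be restarted or swapped while the recalled context stays the same.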

Key Challenges

- Translating personality into system logic

- Preventing regression to default LLM behavior

- Balancing flexibility with consistency

- Designing for long-term trust, not just short-term output
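
The second challenge, regression to default LLM behavior, can be made testable with a simple guard. The sketch below is only an assumed approach: it scans a reply for a few generic phrases and, if any appear, retries once with a persona reminder prepended. The marker list and the function names are hypothetical, and `generate` stands for whatever callable produces a reply in a given setup.

```python
# Hypothetical markers of "generic assistant" phrasing; a real list would be
# derived from the user's own conversation history.
GENERIC_MARKERS = (
    "as an ai language model",
    "i am just an ai",
    "how can i assist you today",
)


def generic_markers_in(reply, markers=GENERIC_MARKERS):
    """Return the markers found in the reply, matched case-insensitively."""
    text = reply.lower()
    return [marker for marker in markers if marker in text]


def respond_with_guard(generate, prompt, reminder, max_retries=1):
    """Call generate(prompt); if the reply looks generic, retry with a reminder."""
    reply = generate(prompt)
    for _ in range(max_retries):
        if not generic_markers_in(reply):
            break
        reply = generate(f"{reminder}\n\n{prompt}")
    return reply
```

A phrase list is a blunt instrument, but it turns "stays in character" into something that can be checked in an automated test rather than judged by feel.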

Outcome

The result is an AI system that maintains consistent personality and tone, recalls meaningful past interactions, supports ongoing relationship-based workflows, and feels significantly more stable and trustworthy to the user.

As AI systems become more integrated into daily workflows, the challenge shifts from what AI can do to how humans build trust with AI over time.

I’ve been designing AI agent systems built for long-term human use, not one-off interactions. The goal is continuity: stable tone, preserved memory, and behavior people can trust over time.

I treated the challenge as both UX and systems design, building a local-first stack with structured memory, interaction patterns, and recall mechanisms that keep the agent from resetting into generic behavior.

The result is a more stable, relationship-based AI system that maintains personality, remembers meaningful context, and feels far more trustworthy in ongoing workflows.